Last month I noticed something odd: a senator sold $2M in hotel stocks three days before a travel industry report tanked the sector. Coincidence? Maybe. But it got me wondering — is there an easy way to track what members of Congress are buying and selling?

Turns out, the STOCK Act of 2012 requires members of Congress to disclose securities transactions over $1,000 within 45 days of the trade. These filings are public, and you can pull them programmatically. I built a Python script that checks for new congressional trades daily, flags the interesting ones, and sends me alerts. Here’s how it works.

Why Congressional Trades Matter

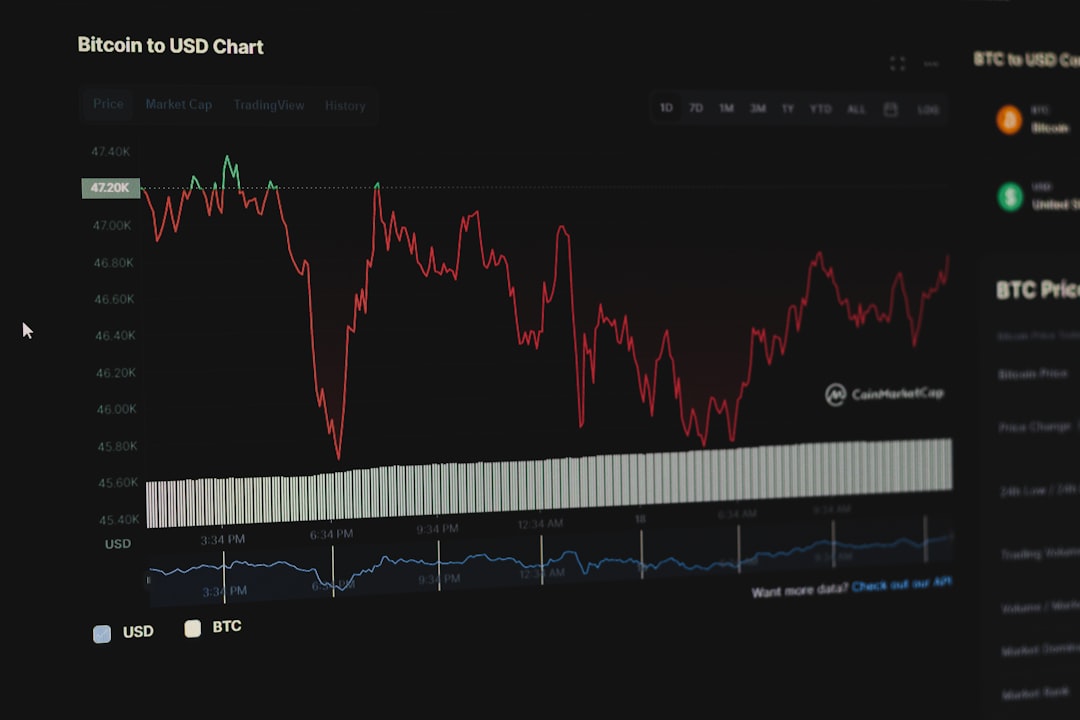

Members of Congress sit on committees that regulate industries, receive classified briefings, and vote on bills that move markets. Whether they’re trading on insider knowledge is a debate I’ll leave to lawyers. What I care about is this: as a group, congressional traders have historically outperformed the S&P 500 by 6-12% annually, depending on the study you reference. A 2022 paper from the University of Georgia put the figure at 8.9% annualized excess returns for Senate trades.

Even if you think it’s all luck, following these trades is a free signal you can add to your research process. At worst, it shows you where politically connected money is flowing.

Where the Data Lives

Congressional financial disclosures are filed through two systems:

- Senate: efdsearch.senate.gov — the Electronic Financial Disclosures database

- House: disclosures-clerk.house.gov — the Clerk of the House system

Both are publicly searchable, but neither offers a clean API. The Senate site has a search form that returns HTML results. The House site recently added a JSON search endpoint, which is nicer to work with. Several community projects scrape and normalize this data. The best-maintained one is the House Stock Watcher dataset on S3, which gets updated daily.

For this project, I combined the House Stock Watcher dataset (free, updated daily, clean JSON) with direct scraping of the Senate EFD search for the freshest possible data.

The Python Script

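Here’s the core of what I run. It pulls House transactions from the public S3 dataset, keeps trades of $15,001 and up (the minimum reporting threshold is $1,001, but small trades are noise), and returns those with a transaction date in the last 7 days. The filter uses the transaction date, not the filing date, so with filings trailing trades by up to 45 days a short window only catches trades that were also disclosed quickly. Widen days_back if a run comes back empty: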

    import json
    import urllib.request
    from datetime import datetime, timedelta

    HOUSE_DATA_URL = (
        "https://house-stock-watcher-data.s3-us-west-2"
        ".amazonaws.com/data/all_transactions.json"
    )

    def fetch_house_trades(days_back=7, min_amount="$15,001 - $50,000"):
        """Return recent House trades at or above min_amount, newest first."""
        req = urllib.request.Request(HOUSE_DATA_URL)
        # A timeout keeps the cron job from hanging on a stalled download.
        with urllib.request.urlopen(req, timeout=30) as resp:
            trades = json.loads(resp.read())

        cutoff = datetime.now() - timedelta(days=days_back)

        # Disclosure brackets in ascending order. The "$1,001 - $15,000"
        # bracket is left out on purpose as noise.
        amount_tiers = [
            "$15,001 - $50,000",
            "$50,001 - $100,000",
            "$100,001 - $250,000",
            "$250,001 - $500,000",
            "$500,001 - $1,000,000",
            "$1,000,001 - $5,000,000",
            "$5,000,001 - $25,000,000",
            "$25,000,001 - $50,000,000",
        ]
        tier_idx = amount_tiers.index(min_amount)
        valid_tiers = set(amount_tiers[tier_idx:])

        recent = []
        for t in trades:
            try:
                tx_date = datetime.strptime(t["transaction_date"], "%Y-%m-%d")
            except (ValueError, KeyError, TypeError):
                continue  # skip records with a missing or malformed date
            if tx_date >= cutoff and t.get("amount") in valid_tiers:
                recent.append(t)

        return sorted(
            recent,
            key=lambda x: x.get("transaction_date", ""),
            reverse=True,
        )

Each transaction record includes the representative’s name, ticker, transaction type (purchase/sale), amount range, and disclosure date. The amount ranges are annoying: Congress doesn’t disclose exact figures, just brackets. But even the brackets tell you a lot when someone drops $500K+ on a single stock.
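Because the brackets arrive as strings, comparing them numerically takes a small parsing step. Here is a minimal sketch, assuming every bracket looks like `$15,001 - $50,000`. The helpers `parse_amount_range` and `at_least` are hypothetical names for illustration, not part of the dataset or the script above, and anything that doesn't fit that shape comes back as `None`.

```python
import re

# Matches brackets such as "$15,001 - $50,000".
_BRACKET = re.compile(r"^\$(\d[\d,]*)\s*-\s*\$(\d[\d,]*)$")


def parse_amount_range(amount):
    """Return (low, high) in whole dollars, or None if the text isn't a bracket."""
    if not isinstance(amount, str):
        return None
    match = _BRACKET.match(amount.strip())
    if match is None:
        return None
    low, high = (int(part.replace(",", "")) for part in match.groups())
    return low, high


def at_least(amount, threshold):
    """True when the bracket's lower bound is at or above threshold dollars."""
    bounds = parse_amount_range(amount)
    return bounds is not None and bounds[0] >= threshold
```

With numeric bounds, a filter like "anything above $250K" becomes `at_least(trade["amount"], 250_000)` rather than a list of bracket strings to keep in sync.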

Filtering for Signal

Raw congressional trade data is noisy. Most trades are mutual fund purchases or routine portfolio rebalancing. The interesting stuff is when you see:

- Committee-relevant trades — A member of the Armed Services Committee buying defense stocks, or a Finance Committee member trading bank shares

- Cluster buys — Multiple members buying the same ticker within a short window

- Large single-stock positions — Anything above $250K in one company

- Timing around legislation — Trades made shortly before committee votes or bill introductions

I added a scoring function that flags trades matching these patterns:

    # Simplified mapping of committees to tickers in the sectors they oversee.
    COMMITTEE_SECTORS = {
        "Armed Services": ["LMT", "RTX", "NOC", "GD", "BA"],
        "Energy": ["XOM", "CVX", "COP", "SLB", "EOG"],
        "Finance": ["JPM", "BAC", "GS", "MS", "C"],
        "Health": ["UNH", "JNJ", "PFE", "ABBV", "MRK"],
        "Technology": ["AAPL", "MSFT", "GOOGL", "AMZN", "META"],
    }

    def score_trade(trade, member_committees):
        """Score a trade from 0 to 100; higher means more worth a look."""
        score = 0
        ticker = trade.get("ticker") or ""
        amount = trade.get("amount") or ""

        # Large position = more interesting. Brackets are matched on their
        # lower bound, and everything from $1,000,001 up gets the top score.
        if amount.startswith(("$1,000,001", "$5,000,001", "$25,000,001")):
            score += 50
        elif amount.startswith(("$250,001", "$500,001")):
            score += 30

        # Committee relevance
        for committee, tickers in COMMITTEE_SECTORS.items():
            if committee in member_committees and ticker in tickers:
                score += 40
                break

        # Purchase vs sale (purchases are more actionable)
        if trade.get("type") == "purchase":
            score += 10

        return min(score, 100)

The committee mapping is simplified here. In production I maintain a fuller list pulled from congress.gov. But even this basic version catches the most egregious cases.

Setting Up Daily Alerts

I run this on a Raspberry Pi 4 sitting in my closet. A cron job runs the script every morning at 7 AM, checks for new trades filed since the last run, and sends me a notification via ntfy (a free, open-source push notification service that you can also self-host).

    import urllib.request

    def send_alert(message, topic="congress-trades"):
        """POST a plain-text message to an ntfy topic."""
        # Topics on the public ntfy.sh server can be read by anyone who
        # knows the name, so pick one that is hard to guess.
        req = urllib.request.Request(
            f"https://ntfy.sh/{topic}",
            data=message.encode(),
            headers={"Title": "Congressional Trade Alert"},
        )
        # Close the response when done and give up if the server stalls.
        with urllib.request.urlopen(req, timeout=30):
            pass

    # In main loop:
    for trade in fetch_house_trades(days_back=1, min_amount="$50,001 - $100,000"):
        msg = (
            f"{trade['representative']}: "
            f"{trade['type']} {trade['ticker']} "
            f"({trade['amount']})"
        )
        send_alert(msg)

The Raspberry Pi draws about 5 watts, costs next to nothing to run, and handles this job without breaking a sweat. If you don’t want to run your own hardware, a $5/month VPS from any provider works too. I wrote about setting up a homelab for projects like this if you want to go the self-hosted route.
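Alerting only on trades that were not there on the previous run means the script needs some memory between runs. One way to do that, sketched under assumptions: keep a JSON file of trade keys that have already been alerted on. The state file path, the fields that make up `trade_key`, and both helper names are hypothetical choices for this example, not necessarily what the full script does.

```python
import json
from pathlib import Path


def trade_key(trade):
    """Build a stable identifier from fields that together describe one trade."""
    fields = ("representative", "ticker", "type", "amount", "transaction_date")
    return "|".join(str(trade.get(f, "")) for f in fields)


def filter_unseen(trades, state_path):
    """Return the trades not yet recorded in state_path, then record them."""
    path = Path(state_path)
    seen = set(json.loads(path.read_text())) if path.exists() else set()

    fresh = []
    for trade in trades:
        key = trade_key(trade)
        if key not in seen:
            seen.add(key)
            fresh.append(trade)

    # Persist the updated set so the next run skips these trades.
    path.write_text(json.dumps(sorted(seen)))
    return fresh
```

Wrapping the fetch in `filter_unseen(fetch_house_trades(days_back=45), "seen.json")` would let the loop look back across the whole filing window without sending the same alert twice.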

What I’ve Learned Running This for 6 Months

A few patterns jumped out after collecting data since late 2025:

Disclosure delays are the real problem. The 45-day filing window means by the time you see a trade, the move may already be priced in. The most useful trades are the ones filed quickly — within 10-15 days. Some members consistently file within a week; those are the ones I weight highest.
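Filing speed is easy to measure once both dates are parsed. Here is a rough sketch, assuming each record carries a `disclosure_date` in `MM/DD/YYYY` form next to the ISO `transaction_date`. That disclosure format is an assumption about the dataset, so check a few records before relying on it. `filing_lag_days` and `fast_filers` are hypothetical helpers.

```python
from datetime import datetime


def filing_lag_days(trade, disclosure_format="%m/%d/%Y"):
    """Days between the trade and its disclosure, or None if a date is unusable."""
    try:
        traded = datetime.strptime(trade["transaction_date"], "%Y-%m-%d")
        disclosed = datetime.strptime(trade["disclosure_date"], disclosure_format)
    except (KeyError, TypeError, ValueError):
        return None
    lag = (disclosed - traded).days
    # A negative lag means the record's dates are inconsistent; treat as unusable.
    return lag if lag >= 0 else None


def fast_filers(trades, max_lag=15):
    """Keep only trades disclosed within max_lag days of the transaction."""
    return [
        t for t in trades
        if (lag := filing_lag_days(t)) is not None and lag <= max_lag
    ]
```

Averaging `filing_lag_days` per member is one way to find the consistently quick filers worth weighting higher.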

Cluster signals beat individual trades. One senator buying Nvidia means nothing. Three members from different parties all buying Nvidia in the same two-week window? That’s worth investigating. My script tracks cluster buys — 3+ distinct members trading the same ticker within 14 days — and those have been the most actionable signals.
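The cluster rule can be sketched as a sliding window over each ticker's trades. This is a simplified illustration rather than production code: it expects the `representative`, `ticker`, and ISO `transaction_date` fields described earlier, and `find_clusters` with its `min_members` and `window_days` parameters are names chosen for this example. It does not filter by transaction type, so pass in only purchases if you want cluster buys specifically.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def find_clusters(trades, min_members=3, window_days=14):
    """Map ticker -> sorted member names for tickers traded by at least
    min_members distinct members inside some window_days-long window."""
    by_ticker = defaultdict(list)
    for t in trades:
        try:
            when = datetime.strptime(t["transaction_date"], "%Y-%m-%d")
            ticker, name = t["ticker"], t["representative"]
        except (KeyError, TypeError, ValueError):
            continue  # skip records missing a field or with a bad date
        if ticker:
            by_ticker[ticker].append((when, name))

    window = timedelta(days=window_days)
    clusters = {}
    for ticker, entries in by_ticker.items():
        entries.sort(key=lambda entry: entry[0])
        # Try a window starting at each trade and keep the largest group found.
        for i, (start, _) in enumerate(entries):
            members = {name for when, name in entries[i:] if when - start <= window}
            best_so_far = len(clusters.get(ticker, ()))
            if len(members) >= min_members and len(members) > best_so_far:
                clusters[ticker] = sorted(members)
    return clusters
```

Counting distinct names rather than trades matters here: one member making three purchases of the same stock is not a cluster.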

Sales matter more than purchases for timing. Purchases can be routine investment. But when several members suddenly sell the same sector? That’s been a leading indicator for bad news more often than purchases predict good news.

I won’t claim this is a trading strategy on its own — it’s one data point I check alongside technicals, fundamentals, and corporate insider trades from SEC Form 4 filings. The congressional data adds a political risk dimension that most retail traders ignore entirely.

Alternatives and Paid Tools

If you don’t want to build your own, several free and paid services track this data:

- Quiver Quantitative (free tier + paid) — best visualization, shows committee-trade correlations. The free tier covers delayed data.

- Capitol Trades (free) — clean interface, basic filtering. No alerts or scoring.

- Unusual Whales ($30-100/mo) — includes congressional data alongside options flow. Worth it if you want both in one platform.

I prefer my DIY version because I can customize the scoring, add my own committee mappings, and cross-reference against other datasets I already collect. But if you just want to glance at the data without writing code, Capitol Trades is solid and free.

Extending It

The basic script above gets you 80% of the value. If you want to go further:

- Add Senate data: the EFD search site requires a bit more scraping work since it returns HTML, but BeautifulSoup handles it. A good Python web scraping reference will save you hours.

- Cross-reference with Polygon.io — I use Polygon’s market data API to check price action after each disclosed trade. This lets you backtest whether following congressional trades would have been profitable.

- Build a dashboard — Grafana + SQLite gives you a clean visual history. Run it on the same Pi.

- Track state-level trades — Some states have their own disclosure requirements for governors and state legislators. Less data, but less competition from other trackers too.
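For the backtesting idea in the list above, the core calculation is the price change over some horizon after a disclosure date. Here is a minimal sketch, assuming you have already fetched daily closes from your market data source into a date-sorted list of `(date, close)` pairs. The function name, the 30-day default horizon, and the input shape are all illustrative choices, and no particular API is implied.

```python
from bisect import bisect_left
from datetime import date, timedelta


def forward_return(closes, start, horizon_days=30):
    """Fractional price change from the first close on or after `start` to the
    first close on or after `start + horizon_days`.

    `closes` must be a list of (date, close) pairs sorted by date.
    Returns None when either date falls past the end of the data.
    """
    dates = [d for d, _ in closes]
    entry_idx = bisect_left(dates, start)
    exit_idx = bisect_left(dates, start + timedelta(days=horizon_days))
    if entry_idx >= len(dates) or exit_idx >= len(dates):
        return None
    entry_price = closes[entry_idx][1]
    exit_price = closes[exit_idx][1]
    return (exit_price - entry_price) / entry_price
```

Measuring from the disclosure date rather than the transaction date keeps the backtest honest, since the disclosure is the earliest point at which you could have acted.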

The full source code for my version is about 400 lines of Python with zero paid dependencies — just stdlib plus BeautifulSoup for the Senate scraping. I might open-source it if there’s interest; drop a comment below if that’d be useful.
